Machine Learning for Plasma Etch Optimization: How AI Is Transforming Process Development

By NineScrolls Engineering · 2025-12-10 · 23 min read · Nanotechnology

Developing a plasma etch process has traditionally been an exercise in expert intuition combined with painstaking experimentation. A typical ICP-RIE process has 6–10 independently adjustable parameters — ICP power, bias power, pressure, gas flows, temperature, and more — creating a vast parameter space that is impractical to explore exhaustively. Researchers often rely on one-factor-at-a-time (OFAT) experiments or design-of-experiments (DOE) approaches, but both have significant limitations when dealing with complex, nonlinear process interactions.

Machine learning (ML) and artificial intelligence (AI) are changing this landscape. From accelerating recipe development to enabling real-time process control, data-driven approaches are making plasma etching smarter, faster, and more predictable. This article explores how ML is being applied to plasma etch processes, what tools and methods are most relevant for research labs, and how these approaches can enhance your existing workflow.

Why Plasma Etching Is Ripe for Machine Learning

Several characteristics of plasma etch processes make them particularly well-suited for ML approaches:

High dimensionality: With many interacting process parameters, the relationship between inputs (recipe settings) and outputs (etch rate, selectivity, profile angle, uniformity, surface roughness) is inherently multivariate and nonlinear. ML models excel at capturing these complex relationships.

Data-rich environment: Modern etch tools generate extensive process data — RF power readings, pressure traces, gas flow logs, optical emission spectra, and endpoint signals. This data is often logged but underutilized. ML transforms this data into actionable process intelligence.

Expensive experiments: Each etch run consumes materials, time, and tool capacity. ML can reduce the number of experiments needed to find an optimal process by intelligently selecting the most informative experiments to run.

Reproducibility challenges: Plasma processes can drift over time due to chamber conditioning, erosion of chamber parts, and other aging effects. ML models trained on process data can detect and compensate for these drifts before they cause yield loss.

Key Applications of ML in Plasma Etching

1. Process Recipe Optimization

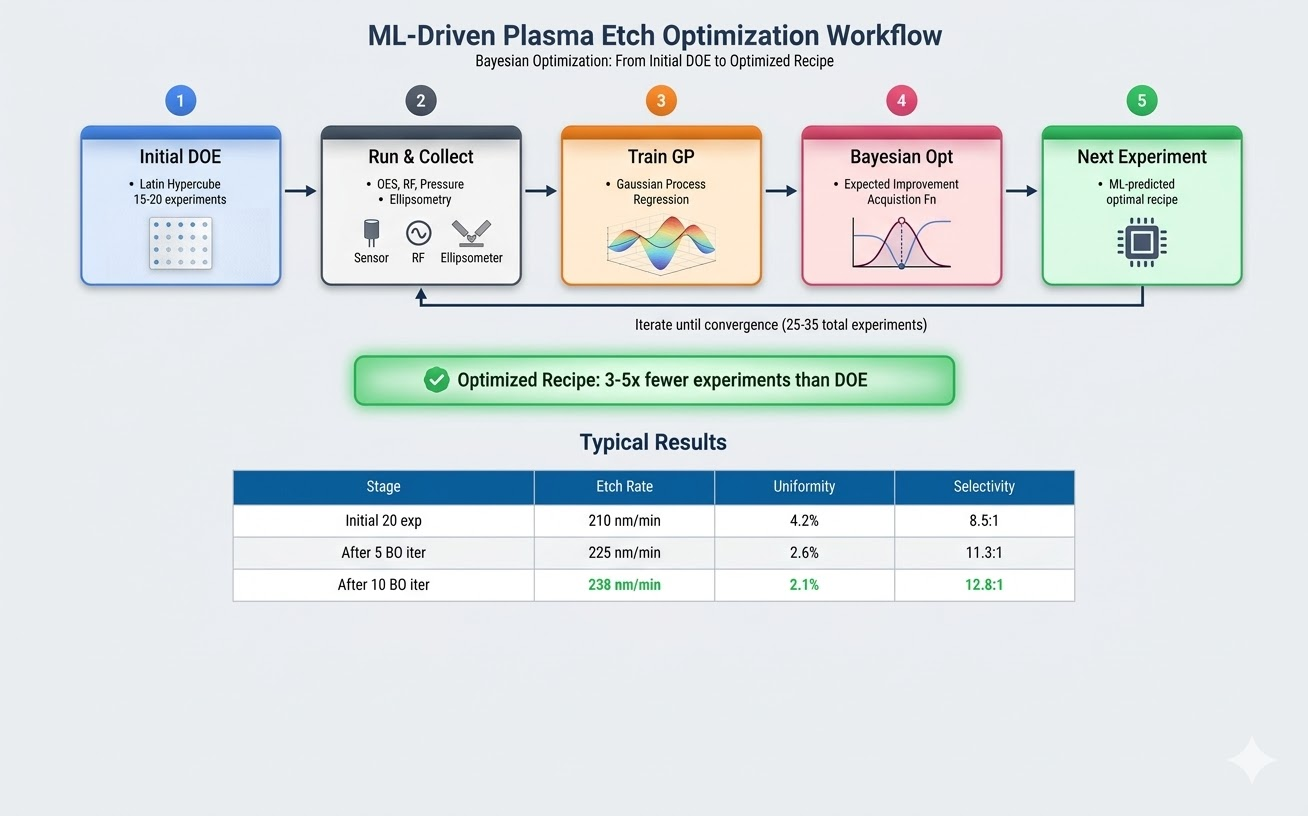

The most immediate application is using ML to find optimal etch recipes faster than traditional DOE approaches. The workflow typically involves:

Data collection: Run an initial set of experiments (20–50 runs) spanning the parameter space of interest. Measure key outputs for each run.

Model training: Train a regression model (Gaussian process, random forest, or neural network) to predict etch outputs from recipe inputs.

Optimization: Use the trained model to identify optimal operating points — either maximizing a single metric or finding the best trade-off among competing objectives (e.g., high etch rate vs. low damage).

Bayesian optimization is particularly powerful here. Instead of requiring a dense grid of experiments, it uses the ML model’s uncertainty estimates to suggest the next most informative experiment. Studies have shown that Bayesian optimization can find near-optimal etch recipes with 3–5× fewer experiments than conventional DOE.

Case Study — GaN HEMT Gate Recess Optimization: A research group at a U.S. national laboratory needed to optimize an ICP-RIE gate recess process for GaN HEMTs, balancing etch rate, surface roughness, sidewall angle, and nitrogen vacancy density across 6 recipe parameters. A traditional full factorial DOE would have required 729 experiments; even a fractional factorial needed 81 runs. Using Bayesian optimization with a Gaussian process surrogate model, they identified a Pareto-optimal recipe in only 35 experiments — achieving < 0.5 nm RMS surface roughness at an etch rate of 80 nm/min with a sidewall angle within 1° of vertical.
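The run counts quoted in this case study can be checked with a few lines of arithmetic. The sketch below assumes three levels per parameter, which the case study does not state but which reproduces both the 729 and the 81 figures.

```python
# Assumption: each of the 6 recipe parameters is tested at 3 levels
# (not stated in the case study; chosen because it reproduces the quoted counts).
n_params = 6
levels = 3

full_factorial = levels ** n_params        # every combination of levels
fractional = levels ** (n_params - 2)      # a 3^(6-2) fractional factorial
bo_runs = 35                               # runs reported for Bayesian optimization

print("full factorial runs:", full_factorial)
print("fractional factorial runs:", fractional)
print(f"fractional design vs. Bayesian optimization: {fractional / bo_runs:.1f}x more runs")
```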

2. Virtual Metrology and Real-Time Prediction

Virtual metrology uses in-situ sensor data (optical emission spectroscopy, RF impedance, pressure readings) to predict etch outcomes in real time — without waiting for post-etch measurement.

By training ML models on paired datasets of sensor data and metrology results, researchers can:

- Predict etch rate and uniformity from OES spectral features during the etch

- Detect process excursions before they produce defective wafers

- Enable run-to-run control by adjusting recipe parameters based on real-time predictions

For research labs, the most accessible entry point is OES-based virtual metrology. Optical emission data is rich, high-dimensional, and readily available on most ICP and RIE systems. Principal component analysis (PCA) combined with simple regression models can provide surprisingly accurate real-time etch rate predictions.
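A minimal sketch of the PCA-plus-regression idea, using scikit-learn. The spectra and etch rates here are synthetic stand-ins, and the choice of five components and a ridge regressor is an illustrative assumption rather than a recommendation from any specific study.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic stand-in for real data: 60 runs x 400 wavelength channels.
n_runs, n_channels = 60, 400
latent = rng.normal(size=(n_runs, 3))              # hidden plasma-state factors
loadings = rng.normal(size=(3, n_channels))        # how each factor appears in the spectrum
spectra = latent @ loadings + 0.1 * rng.normal(size=(n_runs, n_channels))
etch_rate = 200 + 15 * latent[:, 0] - 8 * latent[:, 1] + rng.normal(scale=1.0, size=n_runs)

# Standardize each channel, compress to a few principal components, then regress.
model = make_pipeline(StandardScaler(), PCA(n_components=5), Ridge(alpha=1.0))
r2_scores = cross_val_score(model, spectra, etch_rate, cv=5, scoring="r2")
print(f"cross-validated R^2: {r2_scores.mean():.2f}")

# After fitting on all runs, a new spectrum can be scored as soon as it is acquired.
model.fit(spectra, etch_rate)
predicted = model.predict(spectra[:1])
```

With real OES data, the number of components is usually chosen by inspecting explained variance or by cross-validation rather than fixed in advance.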

Case Study — OES-Based Virtual Metrology: An R&D study demonstrated that a random forest model trained on 200 OES spectra collected during SiO₂ etch in a production ICP chamber could predict post-etch remaining thickness with an accuracy of ±0.8 nm — comparable to standalone spectroscopic ellipsometry measurements. Using the full OES spectrum (400 wavelengths) rather than a handful of manually selected emission lines improved prediction accuracy by 40%.

3. Endpoint Detection Enhancement

Traditional endpoint detection relies on monitoring a single OES wavelength or reflectometry signal for a characteristic change when the etch reaches an interface. ML-enhanced endpoint detection uses the full OES spectrum (hundreds of wavelengths simultaneously) to detect more subtle transitions, such as:

- Thin etch-stop layers (< 5 nm)

- Compositional gradients rather than sharp interfaces

- Partial exposure of underlying layers in non-uniform processes

Algorithms like change-point detection, hidden Markov models, and convolutional neural networks applied to spectral time series can catch transitions that single-wavelength monitoring would miss.
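As one simple instance of change-point detection, the sketch below runs a one-sided CUSUM on a single synthetic intensity trace. The baseline length, drift allowance, and threshold are illustrative assumptions; in practice the same detector could be applied to a principal-component score computed from the full spectrum.

```python
import numpy as np

def cusum_changepoint(signal, baseline_len=50, drift=0.5, threshold=8.0):
    """Return the first index where a one-sided CUSUM of the standardized
    intensity drop exceeds `threshold`, or None if it never does."""
    base = signal[:baseline_len]
    mu, sd = base.mean(), base.std(ddof=1)
    s = 0.0
    for i in range(baseline_len, len(signal)):
        z = (mu - signal[i]) / sd        # positive when intensity falls below baseline
        s = max(0.0, s + z - drift)      # accumulate only sustained drops
        if s > threshold:
            return i
    return None

rng = np.random.default_rng(1)
t = np.arange(300)
# Synthetic emission intensity: flat, then a gradual 3% decline starting at sample 200.
trace = 1.0 - 0.03 * np.clip((t - 200) / 40, 0, 1) + rng.normal(scale=0.005, size=t.size)

endpoint = cusum_changepoint(trace)
print("endpoint flagged at sample", endpoint)
```

The drift allowance trades sensitivity against false alarms: a smaller value reacts sooner to a slow transition but is more easily triggered by noise.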

Case Study — Sub-5 nm Endpoint Detection: Researchers demonstrated that a 1D convolutional neural network (CNN) trained on time-resolved OES data could reliably detect the endpoint of a gate oxide etch when only 2 nm of a 5 nm HfO₂ layer remained — a feat impossible with conventional single-wavelength endpoint monitoring. The CNN learned to identify subtle correlations across 50+ emission wavelengths that collectively signaled the approaching interface.

4. Digital Twins of Etch Chambers

A digital twin is a computational model that mirrors the behavior of a physical etch chamber. It combines physics-based models (plasma kinetics, gas-phase transport, surface reactions) with ML models trained on experimental data to create a comprehensive simulation environment.

Digital twins enable:

- Virtual experimentation: Test new recipes computationally before running physical experiments

- Chamber matching: Understand and compensate for differences between nominally identical etch tools

- Predictive maintenance: Forecast when chamber components need replacement based on process drift patterns

- Transfer learning: Accelerate recipe development on a new tool by leveraging the digital twin from an existing, well-characterized system

Case Study — Chamber Matching with Digital Twins: When neural network-based digital twins were used to match etch performance across multiple production chambers, fine-tuning with just 10–15 runs on a second chamber achieved recipe transfer with < 2% etch rate deviation, compared with the 5–8% deviation typically seen when recipes are copied directly between chambers.
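The fine-tuning idea can be illustrated with something far simpler than a neural network: keep the reference-chamber model fixed and fit a gain-and-offset correction from a dozen runs on the second chamber. Everything below is synthetic, and `reference_model` is a hypothetical stand-in for whatever trained model a lab already has.

```python
import numpy as np

rng = np.random.default_rng(2)

def reference_model(icp_power_w):
    """Hypothetical stand-in for a model trained on the reference chamber."""
    return 50.0 + 0.25 * icp_power_w             # etch rate in nm/min

# 12 calibration runs on the second chamber (synthetic: 6% lower gain, small offset).
power = rng.uniform(300, 800, size=12)
measured = 0.94 * reference_model(power) + 3.0 + rng.normal(scale=1.0, size=12)

# Fit measured ~ a * reference_prediction + b by least squares.
a, b = np.polyfit(reference_model(power), measured, deg=1)

def matched_model(icp_power_w):
    """Reference model with the fitted chamber-specific correction applied."""
    return a * reference_model(icp_power_w) + b

test_power = np.array([400.0, 600.0])
truth = 0.94 * reference_model(test_power) + 3.0
raw_err = np.abs(reference_model(test_power) - truth) / truth * 100
matched_err = np.abs(matched_model(test_power) - truth) / truth * 100
print("error when copying the model directly (%):", raw_err.round(1))
print("error after the fitted correction (%):", matched_err.round(1))
```

A real chamber mismatch is rarely a pure gain and offset, which is why more flexible fine-tuning is used in practice; the sketch only shows why a small calibration set can go a long way.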

5. Feature-Scale Profile Prediction

Predicting the 3D shape of etched features (trench profiles, via sidewalls, undercut geometry) from process parameters is one of the most challenging problems in etch modeling. Traditional feature-scale simulations (Monte Carlo methods, level-set methods) are computationally expensive and require detailed knowledge of surface reaction probabilities.

ML surrogate models trained on simulation data or experimental cross-sections can predict feature profiles orders of magnitude faster than physics-based simulations. This enables rapid exploration of how recipe changes affect feature geometry — particularly valuable for developing high-aspect-ratio etch processes.

6. Predictive Maintenance and Chamber Health Monitoring

Beyond process optimization, ML is proving valuable for predicting when etch chamber components will fail or degrade. By monitoring trends in process sensor data (RF reflected power, matching network positions, pressure stability, OES drift), ML models can forecast maintenance needs days or weeks in advance.
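A very reduced version of this forecasting idea is a linear trend fit that estimates when a monitored signal will cross a service limit. The data and the 10 W limit below are hypothetical; this is plain extrapolation and only illustrates the concept, not the sequence models used in the literature.

```python
import numpy as np

rng = np.random.default_rng(3)

# Synthetic daily average of RF reflected power (W) with a slow upward drift.
days = np.arange(30, dtype=float)
reflected_w = 4.0 + 0.1 * days + rng.normal(scale=0.2, size=days.size)
limit_w = 10.0                      # hypothetical service limit

# Fit a straight line and extrapolate to the limit.
slope, intercept = np.polyfit(days, reflected_w, deg=1)
if slope > 0:
    crossing_day = (limit_w - intercept) / slope
    lead_time_days = crossing_day - days[-1]
else:
    lead_time_days = float("inf")   # no upward trend, no forecast crossing

print(f"estimated lead time: {lead_time_days:.0f} days")
```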

Case Study — RF Match Degradation Detection: A research group developed a long short-term memory (LSTM) neural network trained on 6 months of RF matching network data from an ICP-RIE system. The model correctly predicted 4 of 5 match failures with an average lead time of 72 hours and zero false positives. Implementation required only standard process log data — no additional sensors were needed.

ML Tool Guide for Research Labs

You don’t need a data science team to start applying ML to your etch processes. The tools below are grouped by the experience they assume.

Beginner Level (No ML Experience Required)

- JMP (SAS) — $1,800/year academic: GUI-based DOE design, regression modeling, and visualization. The “Gaussian Process” platform is directly applicable to etch optimization. No programming required.

- MATLAB Statistics and Machine Learning Toolbox: Familiar to most engineers. The fitrgp (Gaussian process regression) and bayesopt (Bayesian optimization) functions are powerful and well-documented.

- Google Colab — Free: Cloud-based Jupyter notebooks with Python + scikit-learn pre-installed. Good for trying out ML workflows before committing to a local installation.

Intermediate Level (Basic Python Familiarity)

- Python + scikit-learn — Free: The most versatile open-source ML library. Key functions: GaussianProcessRegressor, RandomForestRegressor, cross_val_score.

- BoTorch / Ax (Meta) — Free: State-of-the-art Bayesian optimization framework. Supports multi-objective optimization natively, which makes it well suited to balancing competing etch metrics.

- Optuna — Free: Lightweight optimization framework with automatic visualization of parameter importance and optimization history.

Advanced Level (ML/Data Science Background)

- PyTorch / TensorFlow — Free: For custom neural network models (virtual metrology, endpoint detection, profile prediction).

- Weights & Biases (wandb) — Free for academics: Experiment tracking platform for ML training runs.

- COMSOL Multiphysics + ML coupling: For physics-informed ML approaches. COMSOL’s plasma module can generate synthetic training data for feature-scale ML models.

A Step-by-Step Workflow for Your Lab

- Define your objective. What etch metrics matter most? Etch rate? Selectivity? Profile angle? Surface roughness? Define 2–3 key outputs to optimize.

- Identify variable parameters. Select 4–6 recipe parameters to vary. Keep other parameters fixed.

- Design initial experiments. Use a space-filling design (Latin hypercube or Sobol sequence) to place 15–30 initial experiments across the parameter space.

- Run experiments and measure. Execute the initial set. Record process sensor data (OES spectra, RF power/impedance, pressure traces) if available.

- Train initial model. Fit a Gaussian process or random forest model. Evaluate with leave-one-out cross-validation. If R² < 0.7, add more experiments or re-examine parameter ranges.

- Iterate with Bayesian optimization. Use the model to suggest the next 3–5 experiments. Run them, retrain, and repeat. Typically 2–4 iteration rounds suffice.

- Validate. Run 3–5 replicates at the predicted optimal conditions to verify predictions and assess process repeatability.

- Deploy and maintain. Periodically retrain with new data as chamber conditions evolve.
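The model-check step in this workflow can be sketched as follows. The data are synthetic and the kernel is one reasonable choice among several. One practical detail: R² cannot be computed on a single held-out point, so the leave-one-out predictions are collected first and scored together.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel
from sklearn.metrics import r2_score
from sklearn.model_selection import LeaveOneOut, cross_val_predict

rng = np.random.default_rng(4)

# Synthetic stand-in: 20 runs, 4 recipe parameters already scaled to [0, 1].
X = rng.uniform(0, 1, size=(20, 4))
y = (150 + 80 * X[:, 0] + 40 * X[:, 1] - 30 * (X[:, 2] - 0.5) ** 2
     + rng.normal(scale=2.0, size=20))            # etch rate in nm/min

# One length scale per parameter, plus a learned noise term.
kernel = Matern(nu=2.5, length_scale=np.ones(4)) + WhiteKernel(noise_level=0.1)
gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                              n_restarts_optimizer=2, random_state=0)

# Predict each run from a model trained on the other 19, then score all at once.
y_loo = cross_val_predict(gp, X, y, cv=LeaveOneOut())
r2 = r2_score(y, y_loo)
needs_more_data = r2 < 0.7                         # threshold from the workflow above
print(f"leave-one-out R^2: {r2:.2f}, add experiments: {needs_more_data}")
```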

Data Management Best Practices

One of the biggest barriers to applying ML in research labs is not the algorithms — it is the data. Here are practical recommendations:

Structured Data Collection

Create a standardized data template for every etch run:

| Field | Example | Notes |
|---|---|---|
| Run ID | 2026-03-13-001 | Date + sequential number |
| Recipe name | SiO2_ICP_v3.2 | Version-controlled recipe |
| ICP Power (W) | 600 | Actual measured, not setpoint |
| Pressure (mTorr) | 15 | Actual measured average |
| Gas flows (sccm) | CF₄: 45, O₂: 5 | All gases |
| Etch rate (nm/min) | 185 | Method: ellipsometry |
| Uniformity (%) | 2.8 | 1σ, 49-point map |
| Wafers since clean | 15 | Chamber conditioning state |
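One way to enforce such a template in practice is to define it once in code, so every run is recorded with the same fields and units. The sketch below uses only the Python standard library; the field names and the one-column-per-gas layout are illustrative assumptions.

```python
import csv
import io
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class EtchRun:
    run_id: str                 # date + sequential number
    recipe_name: str            # version-controlled recipe
    icp_power_w: float          # actual measured, not setpoint
    pressure_mtorr: float       # actual measured average
    gas_flows_sccm: dict        # all gases, e.g. {"CF4": 45, "O2": 5}
    etch_rate_nm_min: float     # method: ellipsometry
    uniformity_pct: float       # 1 sigma, 49-point map
    wafers_since_clean: int     # chamber conditioning state

def to_row(run):
    """Flatten one record into a dict with one column per gas."""
    row = asdict(run)
    for gas, flow in row.pop("gas_flows_sccm").items():
        row[f"{gas}_sccm"] = flow
    return row

run = EtchRun("2026-03-13-001", "SiO2_ICP_v3.2", 600, 15,
              {"CF4": 45, "O2": 5}, 185, 2.8, 15)
row = to_row(run)

buffer = io.StringIO()          # in practice, an open CSV file in append mode
writer = csv.DictWriter(buffer, fieldnames=list(row))
writer.writeheader()
writer.writerow(row)
print(buffer.getvalue())
```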

Common Data Pitfalls

- Missing chamber state data: Always record wafers-since-clean, RF-on hours, and recent maintenance. Chamber condition is the #1 hidden variable that causes model degradation.

- Inconsistent metrology: If one researcher uses 5-point ellipsometry maps and another uses 49-point maps, the uniformity data is not comparable. Standardize measurement protocols.

- Setpoint vs. actual values: Always record actual measured values from tool logs, not recipe setpoints. A recipe calling for 600 W ICP power may deliver 585 W.

- Unlabeled process changes: If you changed the gas bottle, replaced an electrode, or performed maintenance, record it. These events create discontinuities that confuse ML models.
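The setpoint-versus-actual pitfall is easy to screen for automatically. The sketch below flags runs whose delivered ICP power deviates from the setpoint by more than a tolerance; the 2% tolerance and the record field names are hypothetical.

```python
def deviation_pct(setpoint, actual):
    """Signed deviation of the delivered value from the recipe setpoint, in percent."""
    return (actual - setpoint) / setpoint * 100.0

def flag_runs(runs, tolerance_pct=2.0):
    """Return the IDs of runs whose delivered ICP power misses the setpoint by more than the tolerance."""
    return [r["run_id"] for r in runs
            if abs(deviation_pct(r["icp_setpoint_w"], r["icp_actual_w"])) > tolerance_pct]

runs = [
    {"run_id": "A", "icp_setpoint_w": 600.0, "icp_actual_w": 585.0},  # the 600 W vs. 585 W case
    {"run_id": "B", "icp_setpoint_w": 600.0, "icp_actual_w": 598.0},
]
flagged = flag_runs(runs)
print(flagged)
```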

Worked Example: Bayesian Optimization of SiO₂ Etch

Problem Setup

Suppose you need to optimize an ICP-RIE process for SiO₂ etching. Your target metrics are: etch rate > 200 nm/min, uniformity < 3% (1σ), and selectivity to Si > 10:1. Variable parameters: ICP power (300–800 W), bias power (50–200 W), pressure (5–30 mTorr), and CF₄ flow (20–80 sccm), with O₂ flow fixed at 5 sccm and chuck temperature at 20°C.

Step 1: Initial Experiment Design

```python
# Generate a Latin Hypercube design for initial experiments
import numpy as np
import pandas as pd
from pyDOE2 import lhs

# Define parameter ranges
params = {
    'ICP_power': (300, 800),   # Watts
    'Bias_power': (50, 200),   # Watts
    'Pressure': (5, 30),       # mTorr
    'CF4_flow': (20, 80),      # sccm
}

# Generate 20 experiments using Latin Hypercube Sampling
n_experiments = 20
design = lhs(len(params), samples=n_experiments, criterion='maximin')

# Scale to actual parameter ranges
experiments = pd.DataFrame()
for i, (name, (lo, hi)) in enumerate(params.items()):
    experiments[name] = design[:, i] * (hi - lo) + lo

# Round to practical values
experiments['ICP_power'] = experiments['ICP_power'].round(-1)
experiments['Bias_power'] = experiments['Bias_power'].round(-1)
experiments['Pressure'] = experiments['Pressure'].round(0)
experiments['CF4_flow'] = experiments['CF4_flow'].round(0)
```

Step 2: Train Model and Optimize

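```python
# Bayesian optimization using scikit-learn Gaussian Process
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern
from scipy.stats import norm
from scipy.optimize import differential_evolution

# Inputs: the Step 1 recipes scaled to [0, 1], shape (n_runs, 4)
lo = np.array([low for low, _ in params.values()])
hi = np.array([high for _, high in params.values()])
X_norm = (experiments[list(params)].to_numpy() - lo) / (hi - lo)
# Targets: y_rate must hold the measured etch rate (nm/min) of each run,
# as a NumPy array in the same order as the rows of `experiments`

# Fit Gaussian Process model (normalize_y rescales the targets so the
# unit-amplitude Matern kernel is a sensible prior)
kernel = Matern(nu=2.5, length_scale=0.5,
                length_scale_bounds=(0.01, 10))
gp = GaussianProcessRegressor(
    kernel=kernel, n_restarts_optimizer=10, alpha=1e-2, normalize_y=True)
gp.fit(X_norm, y_rate)

# Expected Improvement acquisition function (for maximizing etch rate)
def expected_improvement(X_new, gp, y_best, xi=0.01):
    mu, sigma = gp.predict(X_new.reshape(1, -1), return_std=True)
    mu, sigma = mu[0], max(sigma[0], 1e-9)  # scalars; avoid division by zero
    imp = mu - y_best - xi
    Z = imp / sigma
    ei = imp * norm.cdf(Z) + sigma * norm.pdf(Z)
    return -ei  # negative because differential_evolution minimizes

# Find next experiment
bounds = [(0, 1)] * 4
result = differential_evolution(
    lambda x: expected_improvement(x, gp, y_rate.max()), bounds)
next_recipe = lo + result.x * (hi - lo)  # back to physical units
```

Typical Results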

| Stage | Etch Rate | Uniformity | Selectivity |
|---|---|---|---|
| Initial 20 experiments | 210 nm/min | 4.2% | 8.5:1 |
| After 5 BO iterations (25 total) | 225 nm/min | 2.6% | 11.3:1 |
| After 10 BO iterations (30 total) | 238 nm/min | 2.1% | 12.8:1 |
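The results in this table can be checked against the three targets from the problem setup (etch rate > 200 nm/min, uniformity < 3%, selectivity > 10:1). The sketch below simply encodes the table values and applies those thresholds.

```python
# Best result at each stage: (etch rate nm/min, uniformity %, selectivity to Si)
stages = {
    "Initial 20 experiments": (210, 4.2, 8.5),
    "After 5 BO iterations": (225, 2.6, 11.3),
    "After 10 BO iterations": (238, 2.1, 12.8),
}

def meets_targets(etch_rate, uniformity, selectivity):
    """True only if all three targets from the problem setup are satisfied."""
    return etch_rate > 200 and uniformity < 3.0 and selectivity > 10.0

passing = {name: meets_targets(*values) for name, values in stages.items()}
for name, ok in passing.items():
    print(f"{name}: {'meets all targets' if ok else 'misses at least one target'}")
```

The initial design already exceeds the etch rate target but misses on uniformity and selectivity, so the optimization iterations are what bring all three metrics into specification at once.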

Figure 1: ML-Driven Etch Optimization Workflow — from initial experimental design through Bayesian optimization to validated recipes

Challenges and Limitations

Data quality matters more than data quantity. A small dataset with accurate, well-controlled measurements is far more valuable than a large dataset with inconsistent metrology. Before applying ML, ensure your measurement repeatability is adequate.

ML models are interpolators, not extrapolators. They work well within the parameter range covered by training data but can produce unreliable predictions outside that range. Always validate predictions that approach the boundaries of your experimental space.
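A simple guard against unintended extrapolation is to check each candidate recipe against the range covered by the training runs before trusting the prediction. The sketch below is a per-parameter bounding-box check; it is an illustrative minimum and does not catch unexplored regions in the interior of the box.

```python
import numpy as np

def outside_training_range(X_train, x_new, margin=0.0):
    """Per-parameter flags: True where x_new lies outside the span of X_train.
    `margin` widens the allowed span by a fraction of each parameter's range."""
    lo, hi = X_train.min(axis=0), X_train.max(axis=0)
    span = hi - lo
    return (x_new < lo - margin * span) | (x_new > hi + margin * span)

# Columns: ICP power (W), pressure (mTorr)
X_train = np.array([[300.0, 5.0], [800.0, 30.0], [550.0, 15.0]])
candidate = np.array([850.0, 10.0])

flags = outside_training_range(X_train, candidate)
print("extrapolating in:", [name for name, f in zip(["ICP_power", "Pressure"], flags) if f])
```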

Physical intuition remains essential. ML can identify optimal conditions, but understanding why a process works requires domain knowledge. Use ML as a complement to — not a replacement for — etch process fundamentals.

Chamber state variability. ML models trained on one chamber state may not generalize to a different state. Include chamber conditioning information in your dataset if possible.

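Overfitting risk. With small datasets (< 30 points) and many parameters, overfitting is a real concern. Gaussian processes are comparatively robust here because their hyperparameters are fit by maximizing the marginal likelihood, which penalizes unnecessary model complexity, and because they report predictive uncertainty. They can still overfit when the kernel has many free hyperparameters, so cross-validation remains worthwhile. Overall they are a good default choice for small-data etch optimization.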

The Road Ahead

Automated experimentation: Closed-loop systems where ML algorithms design experiments, execute them on the tool, measure results, and iterate — all with minimal human intervention. These “self-driving labs” could reduce the time from new material to optimized recipe from weeks to days.

Physics-informed ML: Hybrid models that embed known physics (e.g., Arrhenius rate dependencies, sheath models, ion angular distributions) as constraints within ML frameworks. These models require less training data and generalize better than pure data-driven approaches.
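As a small building block for such hybrid models, a known Arrhenius dependence can be fitted directly and removed from the data, leaving the ML model to learn only the residual. The sketch below recovers an activation energy from synthetic, noise-free rates; the 0.30 eV value and the prefactor are assumptions made for the example.

```python
import numpy as np

K_B_EV = 8.617333262e-5          # Boltzmann constant in eV/K

# Synthetic, noise-free rates generated from an assumed activation energy.
temps_k = np.array([293.0, 313.0, 333.0, 353.0])
true_ea_ev, true_prefactor = 0.30, 1.0e6
rates = true_prefactor * np.exp(-true_ea_ev / (K_B_EV * temps_k))

# Arrhenius form: ln(rate) = ln(A) - Ea / (k_B * T), a straight line in 1/T.
slope, intercept = np.polyfit(1.0 / temps_k, np.log(rates), deg=1)
ea_fit_ev = -slope * K_B_EV
prefactor_fit = np.exp(intercept)

print(f"fitted activation energy: {ea_fit_ev:.3f} eV")
```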

Federated learning across tools: ML models trained on data from multiple etch chambers, potentially across different labs, without sharing raw data.

Foundation models for semiconductor processing: Large-scale models pre-trained on diverse process data that can be fine-tuned for specific etch applications with minimal additional data.

ML-guided ALE development: Applying Bayesian optimization specifically to the challenging parameter space of atomic layer etching could significantly accelerate ALE recipe development for new materials. See our related article: Atomic Layer Etching (ALE): A Practical Guide for Research and Development.

Self-Driving Laboratories

The concept of a “self-driving lab” — where an ML algorithm designs experiments, an automated system executes them, and the results feed back into the model without human intervention — is no longer theoretical. Researchers have coupled Bayesian optimization engines with automated ICP-RIE systems and inline ellipsometry. In one demonstration, a system autonomously ran 48 etch experiments over a weekend, optimizing a 5-parameter Si₃N₄ etch recipe from scratch to within 3% of the best-known recipe.

For research labs, a practical first step toward self-driving capability is automating the Bayesian optimization loop: have the ML model suggest the next experiment, automatically generate the recipe file, load it onto the tool, and import the metrology results after the run. This semi-automated workflow can reduce the time for a 30-experiment optimization from 2 weeks to 2 days.

Conclusion

Machine learning is no longer a distant promise for plasma etch process development — it is a practical tool that can deliver immediate value in research labs. By reducing the number of experiments needed for recipe optimization, enabling real-time process monitoring, and providing predictive capability that traditional approaches cannot match, ML helps researchers spend less time on trial-and-error and more time on the science that matters.

NineScrolls’ etching and deposition systems are designed with comprehensive process data logging and diagnostic capabilities, providing the foundation for data-driven process optimization. Contact us to learn how our systems can support your smart manufacturing research.

Related Articles in This Series

- Atomic Layer Etching (ALE) — precision etching where ML-optimized recipes add significant value

- The Selectivity Challenge — achieving ultra-high etch selectivity with data-driven optimization

- Etching Beyond Silicon — plasma processing challenges where ML accelerates recipe development

Frequently Asked Questions

How many experiments do I need to get started with ML-based etch optimization?

You can start with as few as 15–20 well-designed experiments using a Latin hypercube or Sobol sequence to cover the parameter space. This is enough to train an initial Gaussian process model and begin Bayesian optimization iterations. Studies show that Bayesian optimization typically finds near-optimal recipes in 25–35 total experiments — 3–5× fewer than conventional DOE.

Do I need programming experience to use ML for etch optimization?

No. GUI-based tools like JMP (SAS) provide Gaussian process regression and DOE design without any programming. MATLAB’s bayesopt function requires only a few lines of code. For researchers comfortable with basic Python, scikit-learn and Google Colab (free, cloud-based) offer powerful ML capabilities with extensive tutorials.

What sensor data from my etch tool is most useful for ML?

Optical emission spectroscopy (OES) data is the most valuable — it provides hundreds of wavelength channels that capture real-time plasma chemistry information. RF power and impedance data, chamber pressure traces, and gas flow logs are also useful. The key is to start saving and organizing it systematically, including chamber state metadata like wafers-since-clean and maintenance history.

Can ML models transfer between different etch chambers?

Yes, through transfer learning. A digital twin trained on one reference chamber can be fine-tuned with just 10–15 runs on a second chamber, achieving recipe transfer with < 2% etch rate deviation. Without ML, directly copying recipes between nominally identical chambers typically results in 5–8% deviation.

What is the biggest mistake labs make when starting with ML for etch processes?

Poor data management. Etch process data is often scattered across tool logs, lab notebooks, and individual files with inconsistent formats. The most impactful first step is creating a standardized data template that records actual measured values (not setpoints), includes chamber conditioning state, and is consistently used by all researchers.
